Table Of Contents

Conditional Uniqueness with Partial Indexes Beginner

From the Editors

On Book writing and upcoming speaking engagements

We are on the final stretch of our book writing adventure. All the chapters are done and more or less finalized. We are now going over the proofs of the chapters making last minute corrections before print. Hopefully we'll see the printed version before end February. It's been a long 1.5+ years. I was really hoping we'd be published before Leo's 40th, but his 40th came and went. Though looks like we'll make it before mine with 5 - 6 months to spare. On the bright side, I guess if we write a book again, we'll know what to expect.

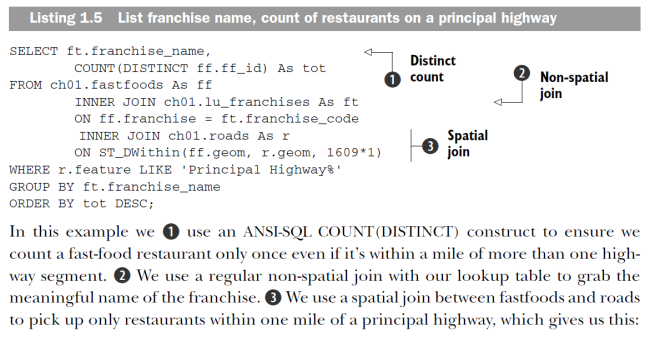

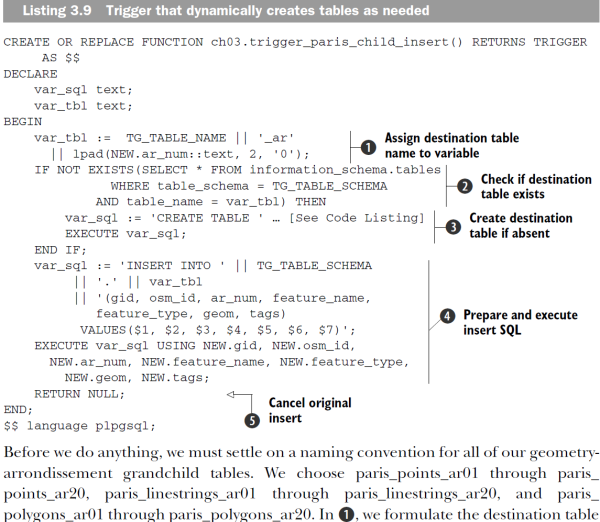

I really love the Manning code annotation style. Here are some snapshots of some from PostGIS in Action. We have just the black and white prints of some of the chapters so we can make sure the printed figures will look okay. The e-book version will be in color, but sadly the printed will be in black and white.

In February, we'll be speaking in 2011 North Carolina Geographic Information Systems Conference, Raleigh, NC USA and visiting a long-time friend from our college days:

- PostGIS 2.0 Raster and 3D Support Enhancements (GIS Goes 3D Special Track) Friday February 18th 8:30 - 10:00 AM

- Cross Comparison of Spatially Enabled Databases: PostGIS, SQL Server and JAvaSPAtial (JASPA) (GeoJenga How to Stack your Apps Track) - Friday February 18th 10:30 - 12:00 PM

PostgreSQL Q & A

Reverse String Aggregation: Explode concatenated data into separate rows In PostgreSQL, SQL Server and MySQL Beginner

In our last article we talked about String Aggregation implementing in PostgreSQL, SQL Server, and MySQL. This task is one that makes purist relational database programmers a bit squeamish. In this article we'll talk about the reverse of that, how do you deal with data that someone hands you delimeted in a single field and that you are asked to explode or re-sort based on some lookup table.

What are the benefits of having a structure such as? :

p_name | activities

--------+--------------------------------

Dopey | Tumbling

Humpty | Cracking;Seating;Tumbling

Jack | Fishing;Hiking;Skiing

Jill | Bear Hunting;Hiking

This brings us to the topic of, what is wrong with this model? It can be slow because the LIKE condition you have can't easily take advantage of an index unless using a full text index so not ideal where this is the primary filtering factor. It's also prone to pollution because you can't easily validate that the values in the field are in your valid set of lookups or if your lookup changes, the text can be forced to change with a CASCADE UPDATE/DELETE RULE etc. In cases where this is of minor consequence which is many if referential integrity is not high on your list of requirements, this design is not bad. It might make a purist throw up but oh well there is always dramamine to fall back on. As long as you have done your cost benefit analysis, I don't think there should be any shame of following this less than respected route.

While you may despise this model, it has its place and it's a fact of life that one day someone will hand it to you and you may need to flip it around a bit. We shall demonstrate how to do that in this article.

Setting up the structure

We'll have a table called lu_activities that lists the possible activities and our table of peopel with a column to hold the activities.

CREATE TABLE lu_activities(activity varchar(50) PRIMARY KEY, category varchar(50));

INSERT INTO lu_activities(activity,category) VALUES ('Bear Hunting', 'Outdoor');

INSERT INTO lu_activities(activity,category) VALUES ('Cracking', 'Other');

INSERT INTO lu_activities(activity,category) VALUES ('Fishing', 'Outdoor');

INSERT INTO lu_activities(activity,category) VALUES ('Hiking', 'Outdoor');

INSERT INTO lu_activities(activity,category) VALUES ('Skiing', 'Outdoor');

INSERT INTO lu_activities(activity,category) VALUES ('Tumbling', 'Other');

CREATE TABLE people(p_name varchar(75) PRIMARY KEY, activities text);

INSERT INTO people(p_name, activities) VALUES('Jack','Fishing;Hiking;Skiing');

INSERT INTO people(p_name, activities) VALUES('Jill', 'Bear Hunting;Hiking');

INSERT INTO people(p_name, activities) VALUES('Humpty','Cracking;Seating;Tumbling');

INSERT INTO people(p_name, activities) VALUES('Dopey', 'Tumbling');

Question 1: Converting a field to rows and sorting

You have a table of people and a field for each person that lists all their activities separated by ;. You luckily also have a lookup table that defines all the possible activities you care about. You want to explode this out into separate records. How do you do this in PostgreSQL, MySQL, and SQL Server.

We want our output to look like this:

-- We want out output to look like --

p_name | activity | category

--------+--------------+----------

Dopey | Tumbling | Other

Humpty | Cracking | Other

Humpty | Tumbling | Other

Jack | Fishing | Outdoor

Jack | Hiking | Outdoor

Jack | Skiing | Outdoor

Jill | Bear Hunting | Outdoor

Jill | Hiking | Outdoor

Having a lookup table makes this excersise fairly trivial. We can use more or less the same solution across these 3 databases. We use a left join to guarantee we will include everyone even if they have no activities.

-- PostgreSQL all versions

SELECT p.p_name, a.activity, a.category

FROM people As p

LEFT JOIN lu_activities AS a ON(';' || p.activities || ';' LIKE '%;' || a.activity || ';%')

ORDER BY p,p_name, a.category, a.activity;-- SQL Server 2005+

-- (for SQL Server 2000 you would replace varchar(max) with varchar(3000) or something along that --

SELECT p.p_name, a.activity, a.category

FROM people As p

LEFT JOIN lu_activities AS a ON(';' + CAST(p.activities As varchar(MAX)) + ';' LIKE '%;' + a.activity + ';%')

ORDER BY p.p_name, a.category, a.activity;

-- MySQL NON-ANSI looks like below (ANSI enabled looks like PostgreSQL solution) --

SELECT p.p_name, a.activity, a.category

FROM people As p

LEFT JOIN lu_activities AS a ON(CONCAT(';' , p.activities , ';') LIKE CONCAT('%;' , a.activity , ';%'))

ORDER BY p.p_name, a.category, a.activity;

Question 2: Converting single row into 2 rows one column for Outdoor activities and one for Other

I'll leave this one as an exercise for the reader. The basic solution is to combine Question 1 with the solution in String Aggregation implementing in PostgreSQL, SQL Server, and MySQL.

PostgreSQL Q & A

Conditional Uniqueness with Partial Indexes Beginner

You have a system of products and categories and you want a product to be allowed to be in multiple categories, but you want a product to only be allowed to be in one main category. How do you enforce this rule in the database?

Some people will say -- why can't you just deal with this in your application logic. Our general reason is that much of our updating doesn't happen at our application level. We like enforcing rules at the database level because it saves us from ourselves. We are in the business of massaging data. For this particular kind of example we wanted to make sure the database would provide us a nice safety net so that we wouldn't accidentally assign a product in two main categories.

AnswerThere are two approaches we thought of. One is the obvious have a primary category column and a bridge table that has secondary categories. That is an ugly solution because when you do a query you have to do a union and always treat the secondary categories as different from the main. For most use-cases we don't usually care about distinguisihing primary from secondary category.

The solution we finally settled on was to have one bridge table with a boolean field for if its the main category. We enforce the only one main category requirement using a partial index. Now not all databases support partial indexes This is one major value of using PostgreSQL that you have so many more options for implementing logic.

So how does the partial index solution look: NOTE for simplicity, we are leaving out all the complimentary tables and the foreign key constraints that we also have in place.

CREATE TABLE products_categories

(

category_id integer NOT NULL,

product_id integer NOT NULL,

main boolean NOT NULL DEFAULT false,

orderby integer NOT NULL DEFAULT 0,

CONSTRAINT products_categories_pkey PRIMARY KEY (category_id, product_id)

);

CREATE UNIQUE INDEX idx_products_categories_primary

ON products_categories

USING btree

(product_id)

WHERE main = true;

Testing it out. It saves us and gives us a nice informative message to boot.

-- now we test our safety net

INSERT INTO products_categories(category_id, product_id, main)

VALUES (1,2,true), (3,2,false), (3,3,true), (4,2,true);

--which gives us error

ERROR: duplicate key value violates unique constraint "idx_products_categories_primary"

DETAIL: Key (product_id)=(2) already exists.

Basics

SQL Server 64-bit Linked Server woes Beginner

We were setting up another SQL Server 2005 64-bit where we needed a linked server connection to our PostgreSQL 9.0 server. This is something we've done before so not new and something we documented in Setting up PostgreSQL as a Linked Server in Microsoft SQL Server 64-bit. What was different this time is that we decided to use the latest version of the new PostgreSQL 64-bit drivers now available main PostgreSQL site http://www.postgresql.org/ftp/odbc/versions/msi/. Sadly these did not work for us. They seemed to work fine in our MS Access 2010 64-bit install, but when used via SQL Server, SQL Server would choke with a message:

Msg 7350, Level 16, State 2, Line 1Cannot get the column information from OLE DB provider "MSDASQL"

If you tried to do a query with them. You can however see all the tables via the linked server tab.

The query runs on PostgreSQL fine and we can see it in the logs. The odbc log looks fine, so seems to be an issue when its coming back to SQL Server. So for the time being until we figure out if its an issue on our end or something wrong with the published 64-bit ODBC drivers, we'll be using these http://code.google.com/p/visionmap/wiki/psqlODBC

64-bit Official PostgreSQL drivers

The odbc 64 bit installs both a ANSI and Unicode. We tried both and both gave same errors

EXEC master.dbo.sp_addlinkedserver @server = N'PGSERVER', @srvproduct=N'PostgreSQL',

@provider=N'MSDASQL', @provstr=N'Driver=PostgreSQL ANSI(x64);uid=pguser;port=5432;Server=localhost;database=mydb;pwd=pgpassword'

EXEC master.dbo.sp_addlinkedserver @server = N'PGSERVER', @srvproduct=N'PostgreSQL',

@provider=N'MSDASQL.1', @provstr=N'Driver=PostgreSQL ANSI(x64);uid=pguser;port=5432;Server=localhost;database=mydb;pwd=pgpassword'

EXEC master.dbo.sp_addlinkedserver @server = N'PGSERVER', @srvproduct=N'PostgreSQL',

@provider=N'MSDASQL', @provstr=N'Driver=PostgreSQL Unicode(x64);uid=pguser;port=5432;Server=localhost;database=mydb;pwd=pgpassword'

EXEC master.dbo.sp_addlinkedserver @server = N'PGSERVER', @srvproduct=N'PostgreSQL',

@provider=N'MSDASQL.1', @provstr=N'Driver=PostgreSQL Unicode(x64);uid=pguser;port=5432;Server=localhost;database=mydb;pwd=pgpassword'Vision Map 64-bit drivers

The visionmap on just installs one so registration looks like (this one works fine)

EXEC master.dbo.sp_addlinkedserver @server = N'PGSERVER', @srvproduct=N'PostgreSQL',

@provider=N'MSDASQL', @provstr=N'Driver=PostgreSQL 64-bit ODBC Drivers;uid=pguser;port=5432;Server=localhost;database=mydb;pwd=pgpassword'

Our connection strings -- using the visionmap one look like:

-- The visionmap on just installs one so registration looks like (this one works fine)

EXEC master.dbo.sp_addlinkedserver @server = N'PGSERVER', @srvproduct=N'PostgreSQL',

@provider=N'MSDASQL', @provstr=N'Driver=PostgreSQL 64-bit ODBC Drivers;uid=pguser;port=5432;Server=localhost;database=mydb;pwd=pgpassword'

Test query

Our query looked something like below, but any query we used gave similar error with the PostgreSQL site 64-bit drivers.

SELECT a.*

FROM OPENQUERY(PGSERVER, 'SELECT table_name FROM information_schema.tables') As a;

Product / Service Contrast Compare

Why choose or not choose PostgreSQL?

Many of our customers ask us this question so we thought we'd lay down our thoughts. The last couple of our articles have been how to do this and that in PostgreSQL, SQL Server, MySQL or having PostgreSQL coexist with an existing SQL Server install. A major reason for that is that in many of our projects we have a choice of what database to choose for a new piece of an application as long as it can play nicely with the existing infrastructure. Our core database competencies are still PostgreSQL, SQL Server, and MySQL with it leaning more toward PostgreSQL each day. We are perhaps somewhat unique in the PostgreSQL community in that Oracle never comes into our equation of decisions (though Oracle and PostgreSQL are perhaps more similar than the others). Oracle is too expensive for most of our clientele so it's a non-issue, and when our clients do have Oracle -- it's thrust upon them by thier ERP/CRM vendor and is essentially off limits to them.

Historically a lot of these new projects we have favored SQL Server over any of the other databases we use for a couple of reasons:

- The clientele we service are predominantly Windows shops to the tune of about 70-80%. These people already have a SQL Server on site and feel very comfortable with it so why install yet another database? The issue of SQL Server cost also isn't a concern for them because they've already invested in the licensing, backup infrastructures, and training to manage their SQL Server boxes.

- The SQL Server security management -- if you are running a Windows shop and assigning permissions based on Windows groups is still much easier with SQL Server. I know PostgreSQL has LDAP/SSPI and all that but most people assign permissions based on Windows user groups not users and they want one place to do that.

- The SQL Server management wizards are nice plus the reporting services, SSIS thrown in. Management tools, maintenance plans are lifesavers for diagnosing problems, doing automated tunining and they are right there and easy to train people how to use. One can argue Reporting Services, SSIS, Maintenance plan wizards may not be the best of the breed and are not really part of the database. The fact they are packaged in means no need to look for other tools. As much as people like to say they like choices, they more value being relieved of the task of making decisions than having a better menu of options. That's why we have approved stacks.

- SQL Server drivers already packaged with Windows. While PostgreSQL ODBC drivers are a simple 5 minute or less install, they still require installation and a scary thing when you are talking about deploying on 500 or more computers.

And of course we've had those clients who are slowly finding out about open source and their first thought is MySQL. Why:

There are still far more MySQL consultants than PostgreSQL consultants which means a MySQL consultant is more replaceable than a PostgreSQL consultant. Replaceability is the most important criteria for many customers, particularly with new engagements. There is an old saying that goes "If you don't have competition, you don't have a market." This works on a myriad of levels. On the one hand Oracle, DB2, SQL Server, MySQL, PostgreSQL are what I call cut throat competitors. The MySQL, SQL Server, PostgreSQL, or Oracle consultant next door fighting for the same customers as you are is an enemy, but they are all relational and the existence of the competition ensures a vibrant genetic relational pool and more importantly the growth of the customer base.

In a NoSQL argument -- they shed their differences and unify against the NoSQL common enemy :). Same goes for PostGIS, DB2 Spatial blades, Oracle Spatial, SQL Server 2008/2010 spatial. Most spatial DBAs I know have one favorite pastime in common: comparing battle stories about ArcSDE :). PostGIS very existence depends on the competition it has with its other spatial database brethren. An Oracle Spatial / DB2 Spatial / SQL server spatial consultant has a much better idea of PostGIS merits than a general PostgreSQL consultant and we all have generally the same kind of customer base. The spatial DBAS are probably more close knit and respectful of each other than other competitive groups just because they are a smaller highly specialized group and are more likely to have to cross database boundaries.

The fact that I can say SQL Server /PostgreSQL can replace one another or that the knowledge in one is same as the knowledge in another is a huge selling point because it means people don't have to be so concerned that there are more SQL Server than PostgreSQL consultants and so on. So the fact you are not unique makes you more attractive. I would go as far as to say if you are very unique, you want to play it down a bit so you don't sound too different from the rest of the pack. Once the sell is made, then you can pull out the uniqueness gun to keep the sale :).

- You want to take advantage of Drupal, Wordpress and all those things you've heard about. Those really aren't supported well on SQL Server. They are supported in PostgreSQL a bit better, but still MySQL owns the lions share in that regard. You need an expert on Drupal or Wordpress to help you out, chances are they don't know anything but MySQL and probably wouldn't touch PostgreSQL or SQL Server with a 10 foot pole.

- MySQL has historically had better support on Windows than PostgreSQL, of course this has changed considerably so is more of a historical footnote with many lingering consequences.

There are a couple of huge reasons why we are choosing PostgreSQL more and more over the others these days or at worst creating infrastructures that are a cross combination.

- A lot of our clients are beginning or thinking about deploying on cloud (or VM) servers for many reasons: to make read-only distributions of their in house SQL Server / PostgreSQL data or for redundancy. PostgreSQL on the cloud is way cheaper than SQL Server on the cloud, and for more advanced queries, PostgreSQL is a much easier drop-in replacement for SQL Server than MySQL is. With the upcoming SQL/MED enhancements in PostgreSQL 9.1 -- it might even be a better datawarehouse tool than any of the others with the ability to better aggregate data from various sources.

- Some of our work involves processing data in PostgreSQL -- e.g doing spatial transformations with PostGIS (that SQL Server 2005 or 2008 can't do), and various other gyrations (not just for spatial but for financial apps and automated data categorization as well) that are made simpler with PostgreSQL array, advanced built-in text manipulation support (like regex,soundex, trigrams, levenshtein etc), and pushing this data back to a SQL Server database which is the database that drives much of the internal business processing. Most of the reason for not pulling out the existing SQL Server system is that it's more cost effective and expedient to push this data back (or using SQL Server's linked query support to query into the PostgreSQL server) than rewrite these applications to use something other than SQL Server.

- With PostgreSQL you own your stack. If you use MySQL you need to be concerned about how you are deploying it and distributing or pay Oracle licensing ransom. Much less concern with SQL Express, but you need to worry about not going over the memory, processor, and database size limits. With PostgreSQL you can completely own it and can distribute it with the rest of your custom stack. This is really critical when you are building a vertical market product that you are deploying at client sites and need to include the cost of the database in your pricing.

- We have a couple of interesting projects we are working on now where being able to deploy quickly, owning your own stack, and having a very extensable system is critical. PostgreSQL is a perfect fit for that set of criteria. Much of this stuff you just can't get on SQL Server or MySQL (e.g. integration with Python, Advance spatial support, deployment on any OS, advanced string support etc) and the subset you can get is usually the newer versions like SQL Server 2008/2010 that many of our clients haven't shelled out money for and not sure if they want to.