Sunday, September 30. 2012


This year has been action-packed for us, both for good and bad. On the positive side, we've gotten more involved in PostGIS and PostgreSQL work on many fronts: writing, consulting, and general project involvement.

On the more somber side, we were stricken with personal tragedies this year.

Continue reading "Writing and other Happenings"

Wednesday, September 26. 2012


James Fee and Paul Ramsey chat about PostGIS/PostgreSQL, PostSQL, why PostGIS is built on top of PostgreSQL, spatial in IT, GeoGit, sports, and the good ol' days of analog technology.

Sunday, September 23. 2012


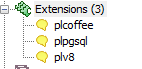

Unfortunately we missed Postgres Open this year, but we did catch some of the slides. One of the talks was Embracing the web with JSON and PLV8 by Will Leinweber of Heroku. He had a great slide deck with a lot of interesting points. One that surprised us was that even for tasks that both PL/PgSQL and PL/V8 can do, PL/V8 is sometimes faster, as demonstrated in slides #51 thru #54.

Another interesting point he covered, which is the topic of this article, is the ease with which you can build PLV8 functions from JavaScript libraries on the web, in particular the JSON:Select library.

In one article we demonstrated a JQuery app with PLV8. One of JQuery's foundations is the CSS-like selector syntax it provides for JSON and HTML document elements, which lets you drill down a document using CSS3-style referencing, much like what xpath does for xml. One of the glaring features missing from the basic JSON support in PostgreSQL 9.2 is a function to navigate a JSON document, comparable to the built-in PostgreSQL xpath function for xml.

So how do we get this json selector goodness available to us in the database? Like all good monkeys, we copy/emulate it.

Continue reading "Using PLV8 to build JSON selectors"

Saturday, September 22. 2012


We've recompiled our PL/V8 for windows against PostgreSQL 9.2.0 (both 32-bit and 64-bit) with latest PL/V8 code. This includes the additional LiveScript language, which you can use by doing:

CREATE EXTENSION plls;

The new release also includes some bug fixes, such as a crash when an array-returning function returns a non-array.

We have also put up binaries and installers for PostGIS 2.0.1 for PostgreSQL 9.2 (32-bit and 64-bit), downloadable from the PostGIS website. We haven't deployed the installers to Application StackBuilder yet since we are doing some testing before we release to the masses.

Tuesday, September 18. 2012


One of the great benefits of building a project like PostGIS on top of PostgreSQL is that when PostgreSQL stands higher, PostGIS becomes that much more powerful without lifting a finger. PostgreSQL development speed has been accelerating, which is pretty exhilarating from a dependent project's point of view.

Now that PostgreSQL 9.3 development is in full swing, we wanted to itemize some things we see coming in this release that will have a great impact on PostGIS use and development. We'd also like to point out items we don't yet see listed that the PostGIS development team has long been waiting for. We'll go over both in this article.

Continue reading "PostgreSQL 9.3 Enhancements and Wishes from a PostGIS Developer POV"

Monday, September 17. 2012


A couple of people have expressed concern that we have not released a PostGIS 2.0.1 for PostgreSQL 9.2 on Windows. I guess 9.2 really makes people want to jump and scream for joy. Even if they don't deploy on Windows, Windows is a popular development platform for kicking the tires. We originally were planning not to release one and were hoping people would not notice and would just start using PostGIS 2.1.0SVN. That plan evidently did not work. So yes, we will be releasing a PostGIS 2.0.1 for 9.2, both 32-bit and 64-bit, probably late this week. We've got some regression kinks showing in the 9.2 64-bit chain that we are troubleshooting before we release to the masses.

We've been busy beefing up the PostGIS testing and interim build infrastructure to replace the old PostGIS Hudson CentOS build bot. If you are in a super rush and really need a 2.0 micro, you can use the PostGIS 2.0.2SVN binaries generated by Winnie, the PostGIS windows build bot. If you hang around the PostGIS IRC channel, you may have stumbled on Debbie, Winnie's younger and more vocal sister (Debian 64-bit hosted on GoGrid), who currently does all the document builds and tarballs. We are hoping to eventually have Debbie pull the PostgreSQL 9.3 source directly from the PostgreSQL Git repository, so we can catch any issues we introduce for 9.3 early.


Chris Travers has an interesting series going on about the Object-Oriented and Polymorphic-like features that PostgreSQL has built in. It reminded me of this syntax I have rarely seen used with PostGIS, which is perfectly valid and may look more familiar to Oracle and SQL Server spatial folks. It looks something like this, though sadly it will only work on unary functions.

SELECT ('LINESTRING(1 2, 3 4)'::geometry).ST_Length;

More common though, if you were to have a table of say geography objects:

CREATE TABLE pois(gid serial primary key, geog geography(LINESTRING,4326));

INSERT INTO pois(geog)
VALUES ('LINESTRING(-164.2559 54.0558,-162.0943 54.33243)'::geography)
 , ('LINESTRING(-46.2559 54.0558,-46.0943 54.33243, -47.1005 55.33243)'::geography);

SELECT (geog).ST_Length As len, (geog::geometry).ST_NPoints As n_pt
FROM pois;

If you notice though, no keystrokes were saved. We've simply changed the order of the parentheses. Damn those ().
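For comparison, here is a sketch of the same query in conventional function-call form, against the pois table defined above. The two forms are interchangeable for single-argument calls, and the character count is about the same:

```sql
-- Conventional function-call form of the attribute-style query above
SELECT ST_Length(geog) As len, ST_NPoints(geog::geometry) As n_pt
FROM pois;
```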

Friday, August 24. 2012


If you do a lot of web-based GIS applications, a common desire is to allow a user to draw out an area on the map, do searches against that area, and get back a FeatureCollection where each feature is composed of a geometry and attributes about that feature. In the past the format was GML or KML, but the world seems to be moving to prefer JSON/GeoJSON. Normally you'd throw in a mapping server that talks Web Feature Service, do more or less the same with web scripting glue, or use a web service such as CartoDb that lets you pass along raw SQL.

In this article we'll demonstrate how to build GeoJSON feature collections that can be consumed by web mapping apps, using the built-in JSON functions in PostgreSQL 9.2 and some PostGIS hugging. Even if you don't use PostGIS, we hope you'll come away with some techniques for working with PostgreSQL extended types and also how to morph relational data into JSON buckets.
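As a rough sketch of the idea, and not necessarily the exact query the full article builds, the 9.2 functions row_to_json and array_to_json can be combined with PostGIS's ST_AsGeoJSON. The locations table, its columns, and the sample rows below are made up for illustration:

```sql
-- Hypothetical table and sample data, for illustration only
CREATE TABLE locations(loc_id serial primary key, loc_name varchar(70)
  , geog geography(POINT,4326));

INSERT INTO locations(loc_name, geog)
VALUES ('Place A', ST_GeogFromText('POINT(-71.06 42.36)'))
 , ('Place B', ST_GeogFromText('POINT(-71.46 42.99)'));

-- Each row becomes a Feature; the features are aggregated into one FeatureCollection
SELECT row_to_json(fc) As geojson
FROM (SELECT 'FeatureCollection'::text As type
        , array_to_json(array_agg(f)) As features
      FROM (SELECT 'Feature'::text As type
              -- ST_AsGeoJSON returns text, so cast it to json to avoid double-quoting
              , ST_AsGeoJSON(lg.geog)::json As geometry
              -- wrap the attribute columns in a row so the JSON keys keep the column names
              , row_to_json((SELECT l FROM (SELECT lg.loc_id, lg.loc_name) As l)) As properties
            FROM locations As lg) As f) As fc;
```

The nested subselect for properties is one way to control which columns end up as attributes; passing the whole row to row_to_json would also drag the geography column along.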

Continue reading "Creating GeoJSON Feature Collections with JSON and PostGIS functions"

Wednesday, August 15. 2012


Lately I'm reminded that one person's feature is another person's frustration. I've been following Paul's PostGIS Apologia, which details why things are done a certain way in PostGIS, written in response to Nathaniel Kelso's A friendlier PostGIS? Top three areas for improvement. I've also been following Henrik Ingo's comparing Open Source GIS Implementation to get a MySQL user's perspective on PostGIS / PostgreSQL. Jo Cook has some interesting thoughts as well in her PostGIS for beginners amendment to Paul's comments.

I have to say that Nathaniel, Henrik, Jo, and the commenters on those entries have overlapping frustrations with PostgreSQL and PostGIS. The number one frustration is caused by how bad a job we do at pointing out avenues to get a friendly installation experience. I plan to change this soon, at least by documenting the most popular PostGIS package maintainers.

One of the things that Henrik mentioned was his frustration with trying to install PostGIS via the PostgreSQL Yum repository, and in fact not even knowing about the PostgreSQL Yum repository, which has current and bleeding-edge versions of PostgreSQL. I was surprised he didn't know because, as a long-time user of PostgreSQL, I had dismissed this as common knowledge. This made me realize just how out of touch I've become with my former newbie self, and I consider this a very bad thing. I was also very surprised by another thing he complained about: CREATE EXTENSION did not work for him because he accidentally installed the wrong version of PostGIS in his PostgreSQL 9.1. The main reason for his frustration was something I thought was a neat feature of PostGIS: PostGIS is not packaged into PostgreSQL core, and you can in fact have various versions of PostGIS installed in the same PostgreSQL cluster. Unlike the OGC spatial offerings of other databases (SQL Server, Oracle, MySQL), this allows the PostGIS dev group to work on its own time schedule, largely apart from PostgreSQL development group pressures.

It also means we can introduce breaking changes in PostGIS 2.+, for example, without impacting existing apps people have running on 1.5, and it allows people to take advantage of newer features even if they are running an earlier PostgreSQL version.
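When CREATE EXTENSION fails because of a version mismatch, a quick way to see what is going on is to ask PostgreSQL (9.1 or later) which PostGIS extension versions it can find. This is a minimal sketch; the version string is only an example and should be replaced with one the first query returns:

```sql
-- List the PostGIS versions whose extension scripts are present on this server
SELECT name, version, installed
FROM pg_available_extension_versions
WHERE name = 'postgis'
ORDER BY version;

-- Install one specific packaged version in the current database
-- (example version string; pick one listed by the query above)
CREATE EXTENSION postgis VERSION '2.0.1';

-- Confirm which PostGIS this database is actually running
SELECT postgis_full_version();
```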

Continue reading "Feature or Frustration"

Sunday, August 12. 2012


There has been a lot of talk lately about schemaless models touted by NoSQL groups and how PostgreSQL fits into this new world order.

Is PostgreSQL Object-Relational? Is it Multi-Model? We tend to think of PostgreSQL as type liberal, and its liberalness gets more liberal with each new release. PostgreSQL is fundamentally relational, but has little bias about what data types define each column of related tables. One of PostgreSQL's great strengths is the ease with which different types can coexist in the same table, and the flexible index plumbing and plan optimizer it provides, which allow each type, regardless of how wild, to take full advantage of various index strategies and custom index bindings.

Our 3 favorite custom non-built-in types we use in our workflow are PostGIS (of course), LTree (Hierarchical Type), and HStore (Key-Value type). In some cases, we may use all 3 in the same database and sometimes the same table, where we use PostGIS for spatial location, LTree for logical location, and Hstore just to keep track of random facts about an object that are easier to access than having a separate related table and are too random to warrant devoting a separate column for each. Sometimes we are guilty of using xml as well, when we haven't figured out what schema model best fits a piece of data and hstore is too flat of a type to work.

The advent of JSON in PostgreSQL 9.2 does provide for a nested schema-less model similar to what the XML type offers, but more JavaScript friendly. I personally see JSON as more of a useful transport type than one I'd build my business around, or a type you'd use when you haven't figured out what, if any, structure is most suitable for your data. When you have no clue what structure a piece of data should be stored in, you should let the data tell you what structure it wants to be stored in; only by storing it in a somewhat liberal fashion will you discover how best to retrofit it in a more structured, self-descriptive manner. Schemas are great because they are self-describing, but they are not great when your data does not want to sit in a self-described bucket. You may find in the end that some data is just wild and refuses to stay between the lines, and then by all means stuff it in xml or json or create a whole new type suit it feels comfortable in.
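To make the "all 3 in the same table" case concrete, here is a minimal sketch. The assets table, its columns, and the sample values are hypothetical; the extensions, operators, and index types are the stock ones shipped with PostGIS, ltree, and hstore:

```sql
CREATE EXTENSION postgis;
CREATE EXTENSION ltree;
CREATE EXTENSION hstore;

-- Hypothetical table mixing the three types
CREATE TABLE assets(asset_id serial primary key
  , geog geography(POINT,4326)  -- spatial location (PostGIS)
  , loc_path ltree              -- logical location (LTree)
  , facts hstore                -- random key-value facts (HStore)
);

-- Each type plugs into the GiST index machinery with its own operator class
CREATE INDEX idx_assets_geog ON assets USING gist(geog);
CREATE INDEX idx_assets_loc_path ON assets USING gist(loc_path);
CREATE INDEX idx_assets_facts ON assets USING gist(facts);

INSERT INTO assets(geog, loc_path, facts)
VALUES (ST_GeogFromText('POINT(-71.06 42.36)')
  , 'hq.floor2.room210'::ltree
  , 'color=>red, vendor=>acme'::hstore);

-- Filter on all three in a single query
SELECT asset_id, facts -> 'color' As color
FROM assets
WHERE loc_path <@ 'hq.floor2'::ltree  -- anything under hq.floor2
  AND ST_DWithin(geog, ST_GeogFromText('POINT(-71.06 42.36)'), 1000)  -- within 1000 meters
  AND facts ? 'vendor';               -- has a vendor key
```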

Friday, August 10. 2012


In our last article, PL/V8JS and PL/Coffee JSON search requests, we demonstrated how to create a PostgreSQL PL/Javascript stored function that takes as input a json-wrapped search request. We generated the search request using PostgreSQL. As mentioned, in practice the json search request would be generated by a client-side JavaScript API such as JQuery. This time we'll put our stored function to use in a real web app built using PHP and JQuery. The PHP part is fairly minimalistic, just involving a call to the database and returning a single row. Normally we use a database abstraction layer such as ADODB or PearDB, but this is so simple that we are just going to use the raw PHP PostgreSQL connection library directly. This example requires PHP 5.1+ since it uses the pg_query_params function introduced in PHP 5.1.

Most of the work is happening in the JQuery client-side tier and in the database part we already saw. That said, the PHP part is fairly trivial to swap out with something like ASP.NET or most other web server-side languages.

Continue reading "PLV8JS and PLCoffee Part 2B: PHP JQuery App"

Thursday, August 09. 2012


PostgreSQL 9.2 beta3 got released this week and so we inch ever closer to final in another 2 months or so. One of the great new features is the built-in JSON type and companion PLV8/PLCoffee languages that allow for easy processing of JSON objects.

One of the use cases we had in mind is to take as input a JSON search request that in turn returns a JSON dataset.

We'll use our table from PLV8 and PLCoffee Upserting.

Keep in mind that in practice the json search request would be generated by a client side javascript API such as our favorite JQuery, but for quick prototyping, we'll generate the request in the database with some SQL.

If you are on windows and don't have plv8 available, we have PostgreSQL 9.2 64-bit and 32-bit plv8/plcoffee experimental binaries and instructions. We haven't recompiled against 9.2beta3, but our existing binaries seem to work fine on our beta3 install.

Continue reading "PLV8JS and PLCoffee Part 2: JSON search requests"

Saturday, July 21. 2012


Today's modern web application workflow in its simplest form looks something like this:

- Get dataset as JSON object, usually using yet another JSON query object to pass the request, using a javascript framework like JQuery/ExtJS/OpenLayers/Leaflet etc.

- Make changes to JSON dataset object and send back to the web server.

- On webserver unravel the JSON object and save to respective database tables. This part is really yucky as it often involves the web application server side language doing the unraveling and then yet another step of setting up stored procedures or other update logic to consume it.

We hate the way people build tiers for the same reason Cartman hates lines at the amusement park. Sure, tiers are great for certain things like building connected microcosms, but most of the time they are overkill and, if applied too early, make your application needlessly complicated. In the end all we care about is data: serving data, analyzing data, getting good data. Everything else is just peacock feathers.

The introduction of JSON type support in PostgreSQL 9.2 and languages PL/V8 (PL/Javascript) and its Pythoness-like twin PL/Coffee provides several options for bringing your data and application closer together since they have native support for JSON. In this first part we'll demonstrate one: an upsert stored procedure that takes a single JSON object instead of separate args, updates existing data, and adds missing records. In later articles we'll show you the front end app and also add a sprinkle of PostGIS in there to demonstrate working with custom types.
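A stripped-down sketch of what such a function can look like in PL/V8 follows. It is not the function from the full article: the inventory table, the upsert_inventory name, and the JSON keys are all hypothetical, and it assumes PL/V8 is installed and hands a json argument to the function body as an already-parsed JavaScript object.

```sql
-- Hypothetical table, for illustration only
CREATE TABLE inventory(prod_code varchar(20) primary key, prod_name text, qty integer);

CREATE OR REPLACE FUNCTION upsert_inventory(param_inv json) RETURNS text AS $$
  // Assumption: param_inv arrives as a JavaScript object, so its keys are plain properties
  var args = [param_inv.prod_code, param_inv.prod_name, param_inv.qty];

  // Try the update first; for non-SELECT statements plv8.execute returns the affected row count
  var n = plv8.execute(
    'UPDATE inventory SET prod_name = $2, qty = $3 WHERE prod_code = $1', args);
  if (n > 0) { return 'updated'; }

  // Nothing matched, so add the missing record
  plv8.execute(
    'INSERT INTO inventory(prod_code, prod_name, qty) VALUES ($1, $2, $3)', args);
  return 'inserted';
$$ LANGUAGE plv8 VOLATILE;

-- The whole record travels as one JSON argument instead of separate args
SELECT upsert_inventory('{"prod_code":"SOCKS", "prod_name":"Socks", "qty":10}'::json);
```

Update-then-insert keeps the sketch short, but two sessions upserting the same key at the same moment can still collide on the primary key, so a production version would need to handle that error.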

Continue reading "PLV8JS and PLCoffee Part 1: Upserting"

Monday, July 16. 2012


I have updated instructions on my gist page for building with PostgreSQL 9.4: Build v8 and plv8.

As mentioned in our previous article, Building on MingW deploying on VC, we often build on MingW and deploy on Windows servers running EDB distributed VC PostgreSQL builds for extensions we want that don't come packaged. One of the new ones we are really excited about is the PL/V8 and PL/Coffee pair. Could we do it, and would it actually work on a VC build? YES WE CAN and yes it does. I HAZ Coffee and a V8.

Here are some instructions we hope others will find useful. Even if you aren't on Windows, you might still find them useful since MingW behaves much like other Unix environments.

If you are on windows and just want to start using PLV8 and PLCoffee, we have binary builds for both PostgreSQL 9.2 Windows 32-bit (pg92plv8jsbin_w32.zip) and PostgreSQL 9.2 Windows 64-bit (pg92plv8jsbin_w64.zip), which you should be able to just extract into your PostgreSQL 9.2 beta windows install. We quickly tested with EDB VC++ builds and they seem to work fine on standard VC++ PostgreSQL 9.2beta2 installs. We haven't bothered building for lower PostgreSQL versions, but if there is some interest, we'd be happy to try.

Continue reading "Building PLV8JS and PLCoffee for Windows using MingW64 w64-w32"

Sunday, July 15. 2012


We are the windows package maintainers of PostGIS. We build these packages using the MingW chain of tools. For other packages we fancy that do not come packaged with the windows VC++ builds, we also build these under mingw. We've described some of these already in File FDW family. For windows 32 builds we build with the commonly known MSys/Mingw32 chain (but an older version, 1.0.11) because of issues we have building with the newer msys/mingw 32. For windows 64-bit installs, we build with the mingw-w64 chain, and in fact we like the mingw-w64 chain so much that we plan to migrate our Mingw32 builds to mingw64. We have PostgreSQL 9.2 and PostgreSQL 9.3 successfully installing under mingw-w64 for windows 32 just fine (with older PostgreSQL we experience a winsock.h something or other error, which we are working on troubleshooting). For 64-bit we use mingw-w64 for building extensions for PostgreSQL 9.0-9.2 and soon 9.3, with some minor issues.

Some people have asked us, why put yourself thru this torture? Why not just build on MS VC++ for everything? Originally we had started on mingw because PostGIS needed a Unix-like environment to compile and run thru the battery of tests. This is still the case, though PostGIS is planning a CMake move with help from Mateusz Loskot, which hopefully will provide a better cross-platform experience and allow us to integrate pgRouting (which already is on CMake). Paul Ramsey rewrote many of the regression test scripts to be completely Perl based and not require sh. The other reality is we just prefer mingw and can't really stomach having to work with VC++. I'll describe why and why not to build with mingw and deploy on VC++ compiled PostgreSQL.

Continue reading "Building on MingW and deploying on VC compiled PostgreSQL Why and Why not"
