Friday, March 25. 2011

PostGIS Mini Conference in Paris, France

There will be a one-day PostGIS mini conference in Paris on June 23rd, organized by Oslandia and Dalibo.

For details, see PostGIS mini conference English Details and PostGIS mini conference French Details.

Speaker submissions are due April 22nd. The main focus will be the upcoming PostGIS 2.0, which, judging from the newsgroup, a lot of people are already trying out.

PGCon 2011 - Paul will make a show

Paul Ramsey (OpenGeo) will be speaking at PGCon 2011: "PostGIS knows where you are?" And everyone will know where Paul is too.

Continue reading "PostGIS News"

Monday, March 14. 2011

Some people have asked us which cloud hosting provider is best for them. The answer, as you would expect, is that it depends. I will say right off that our preferred provider at the moment is GoGrid, but that has more to do with our specific use cases than with GoGrid being absolutely better than Amazon. We choose GoGrid over Amazon most of the time because we know we need the server on all the time anyway, we run mostly Windows servers, we like the real live e-mail, phone, and personalized support they offer free of charge, and we absolutely need multiple public IPs per server since we host multiple SSL sites per server (and SSL, unless you go for the uber *.domain version, can't be done with one IP). GoGrid starts you off with 16 public IPs you can distribute any way you like. Amazon is stingy with IPs, and you basically only get one public IP per server, unless I misunderstood.

In some cases, such as when we are developing for a client and they are playing around with various speeds on various operating systems, Amazon EC2 is a better option since you can just turn off the server and not incur charges. In GoGrid, you have to delete the server instead of just shutting it down.

The cloud landscape is getting bigger, with more players coming on board, which is good since it means you are less likely to be stuck with a provider and you have more bargaining options. We only have experience with GoGrid and Amazon EC2, so we can't speak for the others. Other providers we'd like to try are SkyGone (specifically for PostGIS and other GIS hosting), RackSpace Cloud, etc. Each has its own little gotchas and gems that make it better suited for certain needs and out of the question for others. We are just talking about cloud server hosting, not other services such as cloud application services (like what Microsoft Azure offers), relational database services (like Amazon RDS, built on MySQL, or Microsoft SQL Azure, built on SQL Server 2008), file server services, or SaaS clouds like SalesForce, though many cloud server providers (both GoGrid and Amazon, for example) include some cloud storage space pre-packaged with their cloud server hosting plans.

I find all those other cloud offerings, like database-only hosting, a bit scary, mostly because we haven't experimented with them.

These are the key metrics we judge cloud server hosting plans by. Sure, there are more, but these are the ones that matter most to us when deciding where to deploy. Keep in mind we work mostly with small ISVs, new dot-coms, and non-profits that work with other non-profits but need an external secure web application (SSL) to collect data. We haven't really had much need for all that scaling and stuff, and our clients running much larger servers are still leery of trusting the cloud for that because of the lack of control over disk types, the pricing of larger servers, etc. For those types of clients, if we go with cloud, we'd probably choose GoGrid since they offer a combo plan using real servers and cloud servers.

I will say that for pretty intense PostGIS spatial queries against millions of records with a range of geometry types and sizes (anywhere from single points to multipolygons with 20 to 80,000 or more vertices), we've been using GoGrid and have been surprised at how good the performance is on a modest Dual core 2GHz RAM running Windows 2008 (32-bit). I'm talking about queries that return 50 - 2000 records for a user-drawn spatial region (out of a selection of 3 million records), simplify, transform on the fly, and return spatial intersections, all usually in 4-12 seconds (from generation of the query to output on a web client). This is even with the web server running on the same box as the database server. We haven't run anything that intensive on an Amazon EC2 instance, so we can't compare.

Note that GoGrid has their own chart comparing EC2 and Rackspace with their offering so you might want to check it out. I must also say that these are purely our opinions and we were not influenced by any monetary compensation to say them.

Continue reading "GoGrid and Amazon EC Cloud Servers compare"

Friday, February 25. 2011

Many of our customers ask us this question, so we thought we'd lay down our thoughts.

The last couple of our articles have been about how to do this and that in PostgreSQL, SQL Server, and MySQL, or how to have PostgreSQL coexist with an existing SQL Server install. A major reason for that is that in many of our projects we get to choose the database for a new piece of an application, as long as it can play nicely with the existing infrastructure.

Our core database competencies are still PostgreSQL, SQL Server, and MySQL, with the balance leaning more toward PostgreSQL each day. We are perhaps somewhat unique in the PostgreSQL community in that Oracle never comes into our equation of decisions (though Oracle and PostgreSQL are perhaps more similar than the others). Oracle is too expensive for most of our clientele, so it's a non-issue, and when our clients do have Oracle, it's thrust upon them by their ERP/CRM vendor and is essentially off limits to them.

Continue reading "Why choose or not choose PostgreSQL?"

Monday, February 21. 2011

We were setting up another SQL Server 2005 64-bit install where we needed a linked server connection to our PostgreSQL 9.0 server. This is something we've done before and documented in Setting up PostgreSQL as a Linked Server in Microsoft SQL Server 64-bit.

What was different this time is that we decided to use the latest version of the new PostgreSQL 64-bit ODBC drivers now available on the main PostgreSQL site: http://www.postgresql.org/ftp/odbc/versions/msi/.

Sadly these did not work for us. They seemed to work fine in our MS Access 2010 64-bit install, but when we tried to run a query with them via SQL Server, SQL Server would choke with this message:

    Msg 7350, Level 16, State 2, Line 1
    Cannot get the column information from OLE DB provider "MSDASQL"

You can, however, see all the tables via the linked server tab.

Continue reading "SQL Server 64-bit Linked Server woes"

Saturday, February 19. 2011

Question: You have a system of products and categories. You want a product to be allowed in multiple categories, but in only one main category. How do you enforce this rule in the database?

Some people will say, why can't you just deal with this in your application logic? Our general reason is that much of our updating doesn't happen at the application level. We like enforcing rules at the database level because it saves us from ourselves. We are in the business of massaging data. For this particular example we wanted the database to provide a nice safety net so that we wouldn't accidentally assign a product to two main categories.

Answer

There are two approaches we thought of. The obvious one is to have a primary category column plus a bridge table that holds secondary categories. That is an ugly solution because every query has to do a union and always treat the secondary categories as different from the main one. For most use cases we don't care about distinguishing the primary from the secondary categories.

The solution we finally settled on was to have one bridge table with a boolean field that flags the main category. We enforce the only-one-main-category requirement using a partial index. Not all databases support partial indexes. This is one major value of using PostgreSQL: you have so many more options for implementing logic.

As some people noted in the comments and the reddit entry, SQL Server 2008 has a similar feature called Filtered Indexes, though PostgreSQL has had partial indexes for as far back as I can remember. This brings up an interesting point I have observed: if you were using PostgreSQL before, you would already know how to use Filtered Indexes and multi-row inserts, both introduced in SQL Server 2008, and the SEQUENCES feature coming in the next version of SQL Server. So we should all use PostgreSQL, because it teaches us how to use the newer versions of SQL Server before they come out. :)

So how does the partial index solution look? NOTE: for simplicity, we are leaving out all the complementary tables and the foreign key constraints that we also have in place.

    CREATE TABLE products_categories
    (
        category_id integer NOT NULL,
        product_id integer NOT NULL,
        main boolean NOT NULL DEFAULT false,
        orderby integer NOT NULL DEFAULT 0,
        CONSTRAINT products_categories_pkey PRIMARY KEY (category_id, product_id)
    );

    CREATE UNIQUE INDEX idx_products_categories_primary
        ON products_categories
        USING btree
        (product_id)
        WHERE main = true;

Testing it out. It saves us and gives us a nice informative message to boot.

    INSERT INTO products_categories(category_id, product_id, main)
    VALUES (1,2,true), (3,2,false), (3,3,true), (4,2,true);

    ERROR: duplicate key value violates unique constraint "idx_products_categories_primary"
    DETAIL: Key (product_id)=(2) already exists.
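If you don't have a PostgreSQL server handy, the same idea can be tried with Python's built-in sqlite3 module, since SQLite also supports partial unique indexes. This is only a sketch under assumptions: SQLite stands in for PostgreSQL, `1`/`0` stand in for `true`/`false`, and the `add_category` helper is a hypothetical name made up for illustration. Unlike the single multi-row INSERT above, which is rejected as a whole, this sketch inserts the rows one at a time so you can see which one trips the index.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE products_categories (
    category_id integer NOT NULL,
    product_id  integer NOT NULL,
    main        boolean NOT NULL DEFAULT 0,
    orderby     integer NOT NULL DEFAULT 0,
    PRIMARY KEY (category_id, product_id)
);
-- Partial unique index: uniqueness applies only to rows flagged as main.
CREATE UNIQUE INDEX idx_products_categories_primary
    ON products_categories (product_id)
    WHERE main = 1;
""")


def add_category(category_id, product_id, main=False):
    """Hypothetical helper: insert one bridge row.

    Returns True if the row was accepted, False if the database rejected it.
    """
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO products_categories (category_id, product_id, main) "
                "VALUES (?, ?, ?)",
                (category_id, product_id, int(main)),
            )
        return True
    except sqlite3.IntegrityError:
        return False


# Same four rows as the test above, inserted individually.
results = [
    add_category(1, 2, True),
    add_category(3, 2, False),
    add_category(3, 3, True),
    add_category(4, 2, True),  # second main category for product 2
]
print(results)  # [True, True, True, False]
```

Only the last row is refused, because product 2 already has a main category. Secondary categories for the same product go in freely since they fall outside the index's WHERE clause.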

Monday, January 31. 2011

We are on the final stretch of our book writing adventure. All the chapters are done and more or less finalized. We are now going over the proofs of the chapters, making last-minute corrections before print. Hopefully we'll see the printed version before the end of February. It's been a long 1.5+ years. I was really hoping we'd be published before Leo's 40th, but his 40th came and went. It looks like we'll make it before mine, though, with 5 - 6 months to spare.

On the bright side, I guess if we write a book again, we'll know what to expect.

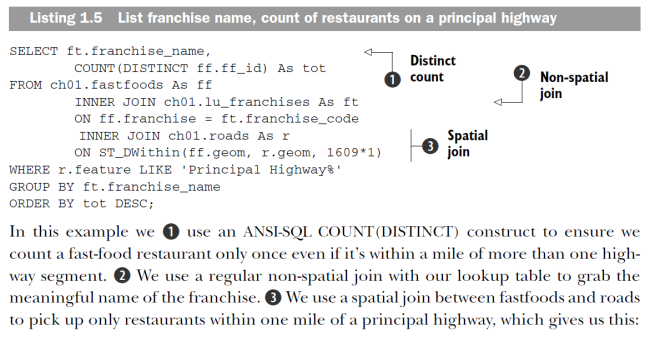

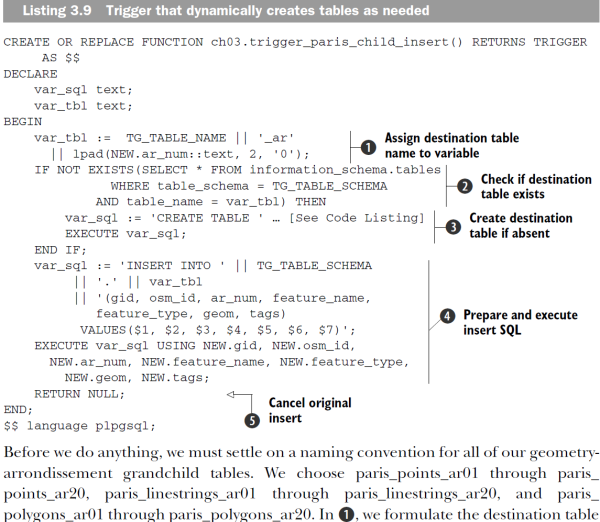

I really love the Manning code annotation style. Here are some snapshots of it from PostGIS in Action. We have just the black and white prints of some of the chapters so we can make sure the printed figures will look okay.

The e-book version will be in color, but sadly the printed version will be in black and white.

In February, we'll be speaking at the 2011 North Carolina Geographic Information Systems Conference in Raleigh, NC, USA, and visiting a long-time friend from our college days:

- PostGIS 2.0 Raster and 3D Support Enhancements (GIS Goes 3D Special Track) Friday February 18th 8:30 - 10:00 AM

- Cross Comparison of Spatially Enabled Databases: PostGIS, SQL Server and JAvaSPAtial (JASPA) (GeoJenga How to Stack your Apps Track) - Friday February 18th 10:30 - 12:00 PM

Tuesday, January 18. 2011

In our last article we talked about implementing String Aggregation in PostgreSQL, SQL Server, and MySQL. That task is one that makes purist relational database programmers a bit squeamish. In this article we'll talk about the reverse: how do you deal with data that someone hands you delimited in a single field and that you are asked to explode or re-sort based on some lookup table?

What are the benefits of having a structure such as this?

     p_name | activities
    --------+--------------------------------
     Dopey  | Tumbling
     Humpty | Cracking;Seating;Tumbling
     Jack   | Fishing;Hiking;Skiing
     Jill   | Bear Hunting;Hiking

Well, for the casual programmer or a simple text-file database that knows nothing about JOINs and so forth, it makes it simple to pull a list of people who like Tumbling. You simply do a WHERE ';' || activities || ';' LIKE '%;Tumbling;%'. It's great for security too, because you can determine access with a simple LIKE check and also list all the security groups a member belongs to without doing anything. It's quite easy for even the least data-skilled of programmers to work with, because most procedural languages have a split function that can easily parse these into an array useful for stuffing into drop-down lists and so forth.

As a consultant to semi-techie people, I'm often torn by the dilemma of "the way I would program for myself" vs. "the way that provides the most autonomy to the client". For example, I try to avoid heavyweight things like Wizards that add bloated dependencies or slow an application down. These bloated dependencies may provide ease to the client but make my debugging life harder. So I weigh the options and figure out which way works now and also provides me an easy escape route should things like speed or complexity become more of an issue.

This brings us to the question: what is wrong with this model? It can be slow, because the LIKE condition can't easily take advantage of an index unless you use a full text index, so it is not ideal where this is the primary filtering factor. It's also prone to pollution, because you can't easily validate that the values in the field are in your valid set of lookups, nor, if your lookup changes, can the text be forced to change with a CASCADE UPDATE/DELETE rule etc. In cases where this is of minor consequence, which is many if referential integrity is not high on your list of requirements, this design is not bad. It might make a purist throw up, but oh well, there is always Dramamine to fall back on. As long as you have done your cost-benefit analysis, I don't think there should be any shame in following this less than respected route.

While you may despise this model, it has its place, and it's a fact of life that one day someone will hand it to you and you may need to flip it around a bit. We shall demonstrate how to do that in this article.
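To make the two ideas concrete before diving in, here is a rough sketch using Python's built-in sqlite3 module. It is not one of the article's PostgreSQL, SQL Server, or MySQL solutions. It assumes the sample data shown earlier, and the table names `people` and `people_activities` are hypothetical. It first runs the delimiter-wrapped LIKE check, then explodes each delimited field into one row per activity using the kind of split function most procedural languages provide.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE people (p_name text PRIMARY KEY, activities text)")
conn.executemany(
    "INSERT INTO people VALUES (?, ?)",
    [
        ("Dopey", "Tumbling"),
        ("Humpty", "Cracking;Seating;Tumbling"),
        ("Jack", "Fishing;Hiking;Skiing"),
        ("Jill", "Bear Hunting;Hiking"),
    ],
)

# The delimiter-wrapped LIKE check from the text: who likes Tumbling?
tumblers = [
    row[0]
    for row in conn.execute(
        "SELECT p_name FROM people "
        "WHERE ';' || activities || ';' LIKE '%;Tumbling;%' "
        "ORDER BY p_name"
    )
]

# Explode: one (p_name, activity) row per delimited value.
conn.execute("CREATE TABLE people_activities (p_name text, activity text)")
for p_name, activities in conn.execute(
    "SELECT p_name, activities FROM people"
).fetchall():
    conn.executemany(
        "INSERT INTO people_activities VALUES (?, ?)",
        [(p_name, activity) for activity in activities.split(";")],
    )

# Once exploded, the data can be re-sorted any way you like.
exploded = conn.execute(
    "SELECT p_name, activity FROM people_activities ORDER BY activity, p_name"
).fetchall()

print(tumblers)  # ['Dopey', 'Humpty']
for row in exploded:
    print(row)
```

Once the values live in their own rows, the usual tools (joins against a lookup table, indexes, foreign keys) become available again, which is exactly what the delimited model gives up.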

Continue reading "Reverse String Aggregation: Explode concatenated data into separate rows In PostgreSQL, SQL Server and MySQL"

Tuesday, December 28. 2010

In a prior article we reviewed PostgreSQL 9 Admin Cookbook by Simon Riggs and Hannu Krosing. In this article we'll take a look at the companion book, PostgreSQL 9 High Performance by Greg Smith.

Both books are published by Packt Publishing and can be bought directly from Packt Publishing or via Amazon. Packt is currently running a 50% off sale if you buy both books (e-Book version) directly from Packt. In addition, Packt offers free shipping for the US, UK, Europe, and select Asian countries.

For starters, the PostgreSQL 9 High Performance book is more advanced than the PostgreSQL 9 Admin Cookbook and is more of a sit-down book. At about 450 pages, it's a bit longer than the PostgreSQL 9 Admin Cookbook. Unlike the Cookbook, it is more a concepts book and much less of a cookbook.

It's not a book you would pick up if you are new to databases and trying to feel your way through PostgreSQL. However, if you feel comfortable with databases in general (not necessarily PostgreSQL) and are trying to eke out the most performance you can, it's a handy book. What surprised me most about this book was how much of it is not specific to PostgreSQL, but is in fact about hardware considerations that are pertinent to most relational databases.

In fact, Greg Smith starts the book off with a fairly shocking statement in the section entitled "PostgreSQL or another database?": "There are certainly situations where other database solutions will perform better." Those are words you will rarely hear from die-hard PostgreSQL users, bent on defending their database of choice against all criticism and framing PostgreSQL as the tool that will solve famine, bring world peace, and cure cancer if only everyone would stop using that other thing and use PostgreSQL instead :).

That, in my mind, made this book a more trustworthy reference if you are coming from some other DBMS and want to know if PostgreSQL can meet your needs as well as or better than what you were using before.

In a nutshell, if I were to compare and contrast the PostgreSQL 9 Admin Cookbook with PostgreSQL 9 High Performance, I would say the Cookbook is a much lighter read focused on getting familiar with and getting the most out of the software (PostgreSQL), while PostgreSQL 9 High Performance is focused on getting the most out of your hardware and pushing your hardware to its limits to work with PostgreSQL. There is very little overlap of content between the two, and as you take on more sophisticated projects, you'll definitely want both books on your shelf. The PostgreSQL 9 High Performance book isn't going to teach you too much about writing better queries, day-to-day management, or how to load data, but it will tell you how to determine when your database is under stress or your hardware is about to kick the bucket, and what is causing that stress. It's definitely a book you want to have if you plan to run large PostgreSQL databases or a high-traffic site with PostgreSQL.

PostgreSQL 9 High Performance is roughly 25% hardware and how to choose the best hardware for your budget, 40% in-depth details about how PostgreSQL works with your hardware and the trade-offs made by PostgreSQL developers to get a healthy balance of performance vs. reliability, and another 35% about various useful monitoring tools for PostgreSQL performance and general hardware performance. Its focus is mostly on Linux/Unix, which is not surprising since most production PostgreSQL installs are on Linux/Unix. That said, there is some coverage of Windows, such as a FAT32/NTFS discussion, considerations when deploying terabyte-size databases on Windows, and issues with shared memory on Windows.

Full disclosure: I got a free e-Book copy of this book just as I did with PostgreSQL 9 Admin Cookbook.

Continue reading "PostgreSQL 9 High Performance Book Review"

Friday, December 24. 2010

Question: You have a table of people and a table that specifies the activities each person is involved in. You want to return a result that has one record per person and a column that lists the activities for each person, separated by semicolons and alphabetically sorted by activity. You also want the whole set alphabetically sorted by person's name.

This is a question we are always asked, and since we mentor on various flavors of databases, we need to be able to switch gears and provide an answer that works on the client's database. Most often the additional requirement is that you can't install new functions in the database. This means that for PostgreSQL and SQL Server, which both support defining custom aggregates, that option is out.

Normally we try to come up with an answer that works in most databases, but sadly the only solution that works in most is to push the problem off to the client front end, throw up your hands, and proclaim, "This ain't something that should be done in the database and is a reporting problem." That is in fact what many database purists do, and all I can say to them is wake up and smell the coffee before you are out of a job. We feel that data transformation is an important function of a database, and if your database is incapable of massaging the data into a format your various client apps can easily digest, WELL THAT'S A PROBLEM.

We shall now document this answer rather than trying to answer it for the umpteenth time. For starters, PostgreSQL has a lot of answers to this question, probably more than any other database, though some are easier to execute than others and many depend on the version of PostgreSQL you are using. SQL Server has 2 classes of answers, neither of which is terribly appealing, but we'll go over the ones that don't require you to be able to install .NET stored functions in your database, since we said that is often a requirement. MySQL has a fairly simple, elegant and very portable way that it has had for a really long time.
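For readers who have not met a custom aggregate before, here is a small sketch of that route using Python's built-in sqlite3 module, which lets you register an aggregate on a connection. It is not one of the article's answers, and it is precisely the option that is ruled out when you can't install functions in the database. The table names, the sample rows, and the `sorted_join` aggregate are all assumptions made up for illustration.

```python
import sqlite3


class SortedJoin:
    """Aggregate that collects values and returns them sorted and joined."""

    def __init__(self):
        self.values = []
        self.delimiter = ";"

    def step(self, value, delimiter):
        # Called once per row in the group; NULLs (no activity) are skipped.
        if value is not None:
            self.values.append(value)
            self.delimiter = delimiter

    def finalize(self):
        # Called once per group; sorting here makes the order deterministic.
        return self.delimiter.join(sorted(self.values))


conn = sqlite3.connect(":memory:")
conn.create_aggregate("sorted_join", 2, SortedJoin)
conn.executescript("""
CREATE TABLE people (p_name text PRIMARY KEY);
CREATE TABLE people_activities (p_name text, activity text);
INSERT INTO people VALUES ('Jack'), ('Jill'), ('Humpty'), ('Dopey');
INSERT INTO people_activities VALUES
    ('Jack', 'Skiing'), ('Jack', 'Hiking'), ('Jack', 'Fishing'),
    ('Jill', 'Hiking'), ('Jill', 'Bear Hunting'),
    ('Humpty', 'Tumbling'), ('Humpty', 'Seating'), ('Humpty', 'Cracking'),
    ('Dopey', 'Tumbling');
""")

# One record per person, activities sorted and semicolon-separated,
# whole set sorted by person's name.
rows = conn.execute("""
    SELECT p.p_name, sorted_join(a.activity, ';') AS activities
    FROM people AS p
    LEFT JOIN people_activities AS a ON a.p_name = p.p_name
    GROUP BY p.p_name
    ORDER BY p.p_name
""").fetchall()

for row in rows:
    print(row)
```

Sorting inside `finalize` is a deliberate choice: it keeps the activity order independent of the order in which the database happens to feed rows to the aggregate.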

Continue reading "String Aggregation in PostgreSQL, SQL Server, and MySQL"

Monday, December 20. 2010

In Part 2 of PL/R we covered how to build PL/R functions that take arrays and output textual outputs of generated R objects. We then used this in an aggregate SQL query using array_agg. Often when you are building PL/R functions you'll have R functions that you want to reuse many times, either inside a single PL/R function or across various PL/R functions.

Unfortunately, calling a PL/R function from another PL/R function is not possible unless you do it from an spi.execute call. There is another way to embed reusable R code in a PostgreSQL database.

To share database-stored R code across various PL/R functions, PL/R has a feature called a plr_module. In this tutorial we'll learn how to create and register shareable R functions with plr_module. In the next part of this series we'll start to explore generating graphs with PL/R.

Continue reading "PL/R Part 3: Sharing Functions across PL/R functions with plr_module"

Friday, December 10. 2010

In Intro to PL/R and R, we covered how to enable the PL/R language in the database and wrote some PL/R functions that rendered plain text reports using the R environment. Combining R and PostgreSQL in PL/R is most powerful when you start writing SQL summary queries that use R functions like any other SQL function.

In this next example, we'll be using PostGIS test runs from tests we autogenerated from the official PostGIS documentation (Documentation Driven Testing (DDT)) as described in the Garden Test section of the PostGIS Developer wiki. We've also updated some of our logging generator and test patterns, so future results may not match what we demonstrated in the last article.

On a side note: among the changes in the tests was the introduction of more variants of the Empty Geometry now supported by PostGIS 2.0. Our beloved PostGIS 2.0 trunk is at the moment somewhat unstable when working with these new forms of emptiness and stuffing geometries in inappropriate places. At the moment it doesn't survive the mindless machine gun battery of tests we have mercilessly inflicted. It's been great fun trying to build a better dummy while watching Paul run around patching holes to make the software more dummy proof as the dummy stumbles across questionable but amusing PostGIS use cases not gracefully handled by his new serialization and empty logic.

On yet another side note, it's nice to see that others are doing similar wonderful things with documentation. Check out Euler's comment on catalog tables, where he uses the PostgreSQL SGML documentation to autogenerate PostgreSQL catalog table comments, using OpenJade's OSX to convert the SGML to XML and then XSL. This is similar to what we did with the PostGIS documentation to autogenerate PostGIS function/type comments and to serve as a platform for our test generator.

For our next exercises we'll be using the power of aggregation to push data into R instead of pg.spi.execute. This will make our functions far more reusable and versatile.

Continue reading "PL/R Part 2: Functions that take arguments and the power of aggregation"

Sunday, November 28. 2010

In this article we'll provide a summary of what PL/R is and how to get running with it. Since we don't like repeating ourselves, we'll refer you to an article we wrote a while ago that is still fairly relevant today, Up and Running with PL/R (PLR) in PostgreSQL: An almost Idiot's Guide, and just fill in the parts that have changed. We should note that that series was geared more toward the spatial database programmer (PostGIS in particular). There is a lot of overlap between the PL/R, R, and PostGIS user bases, which are made up of many environmental scientists and researchers in need of powerful charting and stats tools to analyse their data, and who are high on the smart but low on the money end of the human spectrum.

This series will take more of a general PL/R user perspective. We'll follow much the same style we used in Quick Intro to PL/Python. We'll end our series with a PL/R cheatsheet similar to what we had for PL/Python.

As stated in our State of PostGIS article, we'll be using log files we generated from our PostGIS stress tests. These stress tests were auto-generated from the official PostGIS documentation. The raster tests consist of 2,095 query executions exercising all the pixel types supported. The geometry/geography tests consist of 65,892 spatial SQL queries exercising every geometry/geography type supported in PostGIS 2.0 -- yes, this includes TINs, Triangles, Polyhedral Surfaces, Curved geometries, and all dimensions of them. Most queries are unique. If you are curious to see what these log tables look like or want to follow along with these exercises, you can download the tables from here.

What is R and PL/R and why should you care?

R is both a language and an environment for doing statistics and generating graphs and plots. It is GNU-licensed and a common favorite of universities and research institutions. PL/R is a procedural language for PostgreSQL that allows you to write database stored functions in R. R is a set-based, domain-specific language similar to SQL, except that unlike the way relational databases treat data, it thinks of data as matrices, lists, and vectors. This makes it easier in many cases to tabulate data across columns as well as across rows. I tend to think of it as a cross between LISP and SQL, though more experienced Lisp and R users will probably disagree with me on that.

The examples we will show in these exercises could be done in SQL, but they are much more succinct to write in R. In addition to the language itself, there is a whole wealth of statistical and graphing functions available in R that you will not find in any relational database. These functions are growing as more people contribute packages. Its packaging system, the Comprehensive R Archive Network (CRAN), is similar in concept to Perl's CPAN and the in-the-works PGXN for PostgreSQL.

Continue reading "Quick Intro to R and PL/R - Part 1"

Tuesday, November 23. 2010

I've always enjoyed dismantling things. Deconstruction was a good way of analyzing how things were built, by cataloging all the ways I could dismantle or destroy them. I experimented with mechanical systems, electrical circuitry, chemicals, and biological systems, sometimes coming close to bodily harm. In later years I decided to play it safe and just stick with programming and computer simulation as a convenient channel for my destructive pursuits.

Now getting to the point of this article.

In later articles, I'll start to demonstrate the use of PL/R, the procedural language for PostgreSQL that allows you to program functions in the statistical language and environment R. To make these examples more useful, I'll be analyzing data generated from PostGIS tests I've been working on for stress testing the upcoming PostGIS 2.0. PostGIS 2.0 is a major release and probably the most exciting one for us. Paul Ramsey recently gave a summary talk on the past, present, and future of PostGIS, State of PostGIS, at FOSS4G Japan (http://www.ustream.tv/recorded/10667125), which provides a brief glimpse of what's in store in 2.0.

Continue reading "The State of PostGIS, Joys of Testing, and PLR the Prequel"

Sunday, November 21. 2010

Backup and restore is probably the most important thing to know how to do when you have a database with data you care about.

The utilities in PostgreSQL that accomplish these tasks are pg_restore, pg_dump, pg_dumpall, and, for restoring plain text dumps, psql.

Many of the switches used by pg_dump, pg_restore, and pg_dumpall are common to all three. You use pg_dump to back up a single database or selected database objects, and pg_restore to restore that backup either to another database or to recover portions of a database. You use pg_dumpall to dump all your databases in plain text format.

Rather than trying to keep track of which switch works with which utility, we decided to combine them all into a single cheat sheet with a column denoting which utilities support each switch. Pretty much all the text is compiled from the --help switch of each.

We created a similar Backup and Restore cheatsheet for PostgreSQL 8.3, and since then some new features have been added, such as the parallel restore (jobs) feature in 8.4. We have now created an updated sheet covering all the features present in the pg_dump, pg_restore, and pg_dumpall command line utilities packaged with PostgreSQL 9.0.
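As a quick illustration of how the three utilities fit together, here is a minimal sketch in Python that only builds the command lines and prints them; nothing is executed. The switches used (-h, -U, -Fc, -f, -d, -j) are standard ones, but the helper function names, database name, file names, host, and user are all hypothetical placeholders.

```python
import shlex


def pg_dump_cmd(dbname, outfile, host="localhost", user="postgres"):
    """Custom-format (-Fc) backup of a single database."""
    return ["pg_dump", "-h", host, "-U", user, "-Fc", "-f", outfile, dbname]


def pg_restore_cmd(dbname, infile, jobs=1, host="localhost", user="postgres"):
    """Restore a custom-format dump into an existing database.

    jobs > 1 adds -j for the parallel restore introduced in 8.4.
    """
    cmd = ["pg_restore", "-h", host, "-U", user, "-d", dbname]
    if jobs > 1:
        cmd += ["-j", str(jobs)]
    return cmd + [infile]


def pg_dumpall_cmd(outfile, host="localhost", user="postgres"):
    """Plain text dump of all databases in the cluster."""
    return ["pg_dumpall", "-h", host, "-U", user, "-f", outfile]


def show(cmd):
    """Render an argument list as a shell-safe command line."""
    return " ".join(shlex.quote(arg) for arg in cmd)


print(show(pg_dump_cmd("mydb", "mydb.backup")))
print(show(pg_restore_cmd("mydb_copy", "mydb.backup", jobs=4)))
print(show(pg_dumpall_cmd("all_databases.sql")))
```

Keeping each command as an argument list makes it straightforward to hand to `subprocess.run` later without worrying about shell quoting.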

A PDF portrait version (8.5 x 11") of this cheatsheet is available at PostgreSQL 9.0 Dump Restore 8.5 x 11, and it is also available in PDF A4 format and HTML.

As usual, please let us know if you find any errors or omissions and we'll be happy to correct them.

Sunday, November 14. 2010

Many people have been concerned with Oracle's stewardship of past Sun Microsystems open source projects: Java, MySQL, and OpenSolaris, to name a few.

Why are people concerned? Perhaps the abandoning of projects such as OpenSolaris, the suing of Google over Java infringements, the marshalling out of many frontline contributors of core Open Source projects from Oracle, the idea of forking over license rights to a single company so they can relicense your code. We have no idea. All we know is that there is an awful lot of forking going on.

In Oracle's defense, many do feel that they have done a good job advancing some of the Open Source projects they have shepherded, for example by getting MySQL patches in place more quickly. For some projects where there is not much of a monetary incentive, OpenSolaris for example, many feel Oracle has at best neglected them. Perhaps it's more Oracle's size, and the size Sun was before the takeover, that has made people take notice that no Open Source project is in stable hands when its ecosystem is predominantly controlled by the whims of one big gorilla.

One new fork we were quite interested to hear about is LibreOffice, which is a fork of OpenOffice. In addition to the fork, there is a new organization called the Document Foundation to cradle the new project. The Document Foundation is backed by many OpenOffice developers and corporate entities (Google, Novell, and Canonical, to name a few). The Document Foundation mission statement is outlined here. There is even a Document Foundation planet for LibreOfficerians to call home.

The LibreOffice starter screen looks similar to the OpenOffice starter screen, except instead of the flashy Oracle logo we have come to love and fear, it has a simple text Document Foundation below the basic multi-colored LibreOffice title. Much the same tools found in OpenOffice are present. The project has not forked too much in a user-centric way from its OpenOffice ancestor yet. The main changes so far are the promise of not having to hand over license assignment rights to a single company, as described in LibreOffice - A fresh page for OpenOffice, as well as some general cleanup and the introduction of plugins that had copyright assignment issues, such as some from RedHat and Go-OO. My favorite quote from that article is André Schnabel's: it feels like Oracle is "a mother who loves her child but is not aware that her child wants to walk alone." So perhaps Oracle's greatest contribution and legacy to Open Source, and perhaps the biggest that any for-profit company can make for an Open Source project, is to force its offspring to grow feet to walk away.

In later posts we'll test drive LibreOffice with PostgreSQL to see how it compares to its OO ancestor and what additional surprises it has in store. If Oracle does donate the OpenOffice trademark to the foundation in the future, LibreOffice may go back to being called OpenOffice. Personally I like LibreOffice better, along with the fact that the name change signals a change in governance.
