Friday, February 17. 2012

I was excited to learn from Pasha Golub's blog that LibreOffice Base 3.5 now comes packaged with a native PostgreSQL driver, so no separate configuration is required.

The connection string syntax follows the old SDBC native driver of prior OpenOffice versions, which we itemized in Using OpenOffice Base with PostgreSQL.

What I really wanted to do with it was experiment with its graphical rendering capabilities. As discussed in PSQL needs a better way of outputting bytea, one of the questions most frequently asked on the PostGIS list by folks using the new, not yet officially released functionality in PostGIS 2.0 (alpha5 was recently released) is how to render rasters with common, garden-variety tools. I suspected Base was a capable option, but had never tested it to confirm. Since I was installing the new LibreOffice 3.5, I thought this might be a good test of its mettle.

Continue reading "Rendering PostGIS Raster graphics with LibreOffice Base Reports"

Tuesday, February 14. 2012

Have you ever tried to output a file stored in a bytea column from PostgreSQL using PSQL? It ain't pretty. Andrew Dunstan has a couple of examples of doing this in his article Clever Trick Challenge. This issue has now become very relevant for PostGIS raster use. Now that PostGIS in its 2.0 incarnation can clip rasters, union rasters, do map algebra, convert geometries to rasters, and convert those rasters to various image file formats, people naturally assume it should be trivial to output your newfangled raster query as a JPEG, PNG, etc. via PSQL. Turns out it is not. For those who need to, I've documented an example using one suggestion from Andrew's blog, which utilizes the large object support built into PostgreSQL. It would be nice if this were simpler.

I chose this approach because it was the only one that didn't assume the user has Perl installed or is on a Unix system, so it should work much the same regardless of the client OS in use. You can find the example in Outputting Rasters with PSQL. Suggestions on how to improve on this are welcome.
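For readers on newer PostgreSQL releases, here is a minimal psql sketch of the same large object idea. It assumes PostgreSQL 9.4+ for lo_from_bytea and psql 9.3+ for \gset (both newer than the versions discussed here), and it is not necessarily the exact sequence used in the linked article. The temp table my_rasters, its placeholder bytes, and the output file name are hypothetical; for a PostGIS raster you would feed lo_from_bytea something like ST_AsPNG(rast) instead of a stored bytea column.

```sql
-- psql script (not plain SQL: \gset and \lo_export are psql meta-commands)
\set ON_ERROR_STOP on
-- hypothetical stand-in table holding one bytea value (just the 8-byte PNG signature)
CREATE TEMP TABLE my_rasters(id int PRIMARY KEY, img bytea);
INSERT INTO my_rasters VALUES (1, '\x89504e470d0a1a0a'::bytea);
-- copy the bytea into a new large object; \gset stores the returned OID in :loid
SELECT lo_from_bytea(0, img) AS loid FROM my_rasters WHERE id = 1 \gset
-- \lo_export writes the large object to a file on the CLIENT machine
\lo_export :loid 'out.png'
-- remove the now-unneeded large object from the server
SELECT lo_unlink(:loid);
```

Because \lo_export runs on the client side, this works the same whether psql is on Windows or Unix, which is the property the paragraph above is after.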

Sunday, February 05. 2012

As many may have noticed, PostgresOnline.com has been down for the past week or so and is probably still unreachable from many parts of the world. Our DNS server was also taken down by a Distributed Denial of Service (DDoS) attack, instigated through an exploit in Activision's Call of Duty game that turned thousands of Call of Duty game servers into zombies attacking us.

We have a small confession to make. One of the businesses we co-own is an e-commerce site that sells condoms. You never know how people will react when you say that in mixed company, so we only mention it in closer company. Some people are glad we are in a business that protects against venereal diseases and unwanted pregnancies, and some feel strongly that we are violating Mother Nature's code of conduct. WowCondoms was the site under attack on a UDP port, and we are not sure whether the intent was malicious, since the root instigator has not been found yet. The attack hit upstream of our servers, so it knocked out our ISP, who in turn blamed us for their outage. We never saw the traffic.

The tragic thing is that it can happen to any site and does all the time. It really hit home when it happened to us.

Details of our fight are described here: WowCondoms plugs hole in Activision's Call of Duty Game Servers

Wednesday, January 25. 2012

PostgreSQL has aggregate functions called bool_and and bool_or, which it has had for as far back as I can remember.

What do they do? Given rows of premises (things that resolve to booleans), bool_and returns true if all of the premises are true. Similarly, bool_or returns true if any of the premises in the set of rows is true. What if, however, your boolean expressions are not in rows, but are instead passed in as a sequence of arbitrary statements of questionable fact? We want a function like bool_or or bool_and that takes an arbitrary number of boolean arguments. Are there functions that fit the bill? Indeed there are, but they don't scream out "I work with booleans" because they belong to a class of functions we discussed in The wonders of Any Element, and they also happen to be variadic.

These are none other than greatest and least, old-timer functions you can find in most versions of PostgreSQL. We'll demonstrate how to use all 4 with booleans in this article. It must be said that greatest and least are much more useful when applied to other data types like dates and numbers, but we were amused by the parallel with booleans.
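Here is a minimal sketch of the parallel, using a throwaway VALUES list as the hypothetical input rows. Since PostgreSQL orders false before true, greatest over booleans behaves like OR and least behaves like AND.

```sql
-- bool_and / bool_or aggregate across ROWS
SELECT bool_and(v) AS all_true,   -- false: one row is false
       bool_or(v)  AS any_true    -- true: at least one row is true
FROM (VALUES (true), (false), (true)) AS t(v);

-- greatest / least work across ARGUMENTS; with false < true,
-- greatest acts like OR and least acts like AND
SELECT greatest(true, false, true) AS any_true,  -- true
       least(true, false, true)    AS all_true;  -- false

-- unlike the AND/OR operators, greatest/least skip NULL arguments
SELECT least(true, NULL) AS least_skips_null,    -- true
       (true AND NULL)   AS and_operator;        -- NULL
```

That last query is worth remembering: least and greatest return NULL only when every argument is NULL, so they are not drop-in replacements for chained AND/OR when NULLs carry meaning.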

Side note: we've started writing our book on PostgreSQL, which will be published by O'Reilly. We hope to finish it within the next 5-7 months, but will have preliminary e-book drafts available before then for early purchasers to scan.

The focus of the book will be PostgreSQL 9.1, with some highlights of the upcoming PostgreSQL 9.2. Of course, oldie-but-goodie topics are in the book too. It's a thrill to be writing again.

Continue reading "True or False every which way"

Monday, January 16. 2012

If I could name the number one feature I love most about PostgreSQL, it's table inheritance, which we described in How to Inherit and Uninherit. A lot of people use it for table partitioning with CONSTRAINT EXCLUSION. Aside from that, in combination with the PostgreSQL schema search_path (customizable by user and/or database), it makes for a very flexible abstraction tool. For example, many of our web apps serve many departments where each department/client wants to keep a high level of autonomy, so we set aside a schema for each that inherits from a master template schema. Each department site uses a different set of accounts, with the department's/client's schema as the primary schema, so that they are hitting their own tables.

Inheritance allows us to keep data separate, do roll-up reports if we need to, use the same application front-end, and still add new columns in just one place (the master template schema). It is more flexible than other approaches because, for example, a city organization may need to share tables, such as a system-loaded list of funding sources shared across the agency. We can set these shared tables aside in a separate schema visible to all, or give some departments their own copy they can change if they don't want to use the shared one.

Every once in a while, we find ourselves needing to query the whole hierarchy and needing to know which table each result row is coming from. To help solve that issue, we employ the system column tableoid, which all user tables have. The tableoid is the object id of the table a row lives in. PostgreSQL has several system columns that you have to select explicitly because they are not returned by SELECT *, and tableoid is one of them. These are tableoid, cmax, cmin, xmin, xmax, and ctid, which are all described in System Columns. The PostgreSQL docs on inheritance have examples of using it, but we thought it worthwhile to repeat the exercise, since it's not common knowledge and is a unique enough feature of PostgreSQL that people coming from other relational databases may miss the treat. I've often demonstrated it to non-PostgreSQL users who use, for example, SQL Server or MySQL, and they literally fall out of their chairs when I show them the feature and its endless possibilities.
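A minimal sketch of the schema-per-department layout and the tableoid trick described above. The schema names master_template, dept_a, and dept_b and the contacts table are hypothetical, chosen only for illustration.

```sql
-- hypothetical layout: a master template schema plus one schema per department
CREATE SCHEMA master_template;
CREATE SCHEMA dept_a;
CREATE SCHEMA dept_b;

CREATE TABLE master_template.contacts(id serial PRIMARY KEY, name text);
-- each department table inherits the template's columns (and the id default)
CREATE TABLE dept_a.contacts() INHERITS (master_template.contacts);
CREATE TABLE dept_b.contacts() INHERITS (master_template.contacts);

INSERT INTO dept_a.contacts(name) VALUES ('Ann');
INSERT INTO dept_b.contacts(name) VALUES ('Bob');

-- querying the parent returns rows from the whole hierarchy;
-- casting tableoid to regclass shows each row's source table by name
SELECT tableoid::regclass AS source_table, id, name
FROM master_template.contacts
ORDER BY id;

-- a department account whose search_path starts with its own schema
-- hits its own table when it uses the unqualified name
SET search_path = dept_a, public;
SELECT name FROM contacts;  -- resolves to dept_a.contacts
```

Note that the primary key on the template table is not inherited by the children, so uniqueness across the hierarchy is not enforced; the shared serial default just makes collisions unlikely.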

Continue reading "Table Inheritance and the tableoid"

Sunday, January 08. 2012

PostgreSQL has an interesting placeholder called anyelement, which it has had for a long time, along with its complement anyarray. They are used when you want to define a function that can accept arguments of many types or return many types of output. They are particularly useful for defining aggregates, which we demonstrated in Who's on First and Who's on Last and several other aggregate articles.

Anyelement / anyarray can be used just as conveniently in other functions. The main gotcha is that once the first anyelement/anyarray argument fixes a data type, every subsequent anyelement argument must be of that same type, and every anyarray argument must be an array of it.
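A minimal sketch of both placeholders in plain SQL functions. The function names first_non_null and append_if_missing are hypothetical helpers made up for this illustration.

```sql
-- hypothetical helper: returns the first non-NULL of two values of ANY (matching) type
CREATE OR REPLACE FUNCTION first_non_null(anyelement, anyelement)
RETURNS anyelement
LANGUAGE sql IMMUTABLE
AS $$ SELECT COALESCE($1, $2) $$;

-- hypothetical helper: anyarray must be an array OF the anyelement type
CREATE OR REPLACE FUNCTION append_if_missing(anyarray, anyelement)
RETURNS anyarray
LANGUAGE sql IMMUTABLE
AS $$ SELECT CASE WHEN $2 = ANY($1) THEN $1 ELSE array_append($1, $2) END $$;

SELECT first_non_null(NULL::int, 42);          -- 42
SELECT first_non_null('x'::text, 'y'::text);   -- x
SELECT append_if_missing(ARRAY[1,2], 3);       -- {1,2,3}
SELECT append_if_missing(ARRAY[1,2], 2);       -- {1,2}

-- the gotcha: date and integer cannot both resolve to one anyelement,
-- so this call is rejected with an error
-- SELECT first_non_null(current_date, 1);
```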

Continue reading "The wonders of Any Element"

Wednesday, December 28. 2011

For those who aren't familiar with hstore, it's a key/value storage type that has been packaged as a contrib module since PostgreSQL 8.2 (and as an extension since 9.1). In PostgreSQL 9.0 it got a little extra loving in several ways, one of which was the introduction of the hstore(record) casting function, which converts a record to an hstore. In this article, I'll demonstrate how you can use this new casting function to do very sleek mail merges right in the database. The only caveat is that it seems to name the keys correctly only if it is fed a real table or view. Derived queries such as aggregates get keys named f1, f2, etc.

If you are on PostgreSQL 9.1 or above, installing hstore is just a CREATE EXTENSION hstore; SQL command away. If you are on a lower version of PostgreSQL, you can usually find hstore.sql in share/contrib.
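A minimal sketch of the idea, assuming PostgreSQL 9.1+ (for CREATE EXTENSION). The recipients table, the [key] placeholder convention, and the mail_merge function are all hypothetical and not necessarily how the full article does it: hstore(r) turns each row into column-name => value pairs, and each() lets the function walk those pairs generically.

```sql
CREATE EXTENSION IF NOT EXISTS hstore;

-- hypothetical data: a real table, so hstore(record) keeps the column names as keys
CREATE TEMP TABLE recipients(first_name text, city text);
INSERT INTO recipients VALUES ('Jane', 'Boston'), ('Raj', 'Austin');

-- hypothetical helper: replace every [key] placeholder with the matching value
CREATE OR REPLACE FUNCTION mail_merge(tmpl text, fields hstore)
RETURNS text
LANGUAGE plpgsql IMMUTABLE
AS $$
DECLARE
    kv record;
    merged text := tmpl;
BEGIN
    -- each() returns one (key, value) row per hstore entry
    FOR kv IN SELECT key, value FROM each(fields) LOOP
        merged := replace(merged, '[' || kv.key || ']', COALESCE(kv.value, ''));
    END LOOP;
    RETURN merged;
END;
$$;

SELECT mail_merge('Dear [first_name], greetings from [city]!', hstore(r)) AS letter
FROM recipients AS r
ORDER BY r.first_name;
-- Dear Jane, greetings from Boston!
-- Dear Raj, greetings from Austin!
```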

Continue reading "Mail Merging using Hstore"

Wednesday, December 14. 2011

Two exciting things happened this past month.

- Our article on the upcoming PostGIS 2.0 recently came out in GeoInformatics Magazine, December 2011, Issue 8. Check it out here; it starts on page 30.

- We just got notice that our book proposal has been accepted and this time it's not about PostGIS.

Continue reading "GeoInformatics article and new book in the works"

Sunday, December 11. 2011

In our article The Pure Relational database is dead, there were a lot of misunderstandings as a result of our poor choice of words. People thought we were bashing the relational model because in their minds that was what pure meant. I got hit with a lot of poetic insults. I still can't think of an alternative word for what I meant. Simple doesn't really do it, as even relational databases with just standard types were far from simple when you consider the planner and all the other stuff going on under the hood to protect you from the underlying storage structure. What I was trying to say is that in the beginning most relational databases supported just a standard set of types which you could not expand on, and when most people think relational today, they still think just that. That type of relational database is, in my book, dead or almost dead.

How did this all start? Well, whenever we use something like PostgreSQL to store anything complex (take your pick: geometry data, tree-like structures which we use ltree for, full-text query constructs, and yes, XML), we get bashed by some know-it-all who has a very narrow view of what a relational database should be doing and suggests we use a NoSQL database, a graph engine, or a full-text engine, or normalize our data more. I have also learned XML is a dirty word to many people. I mistakenly thought XML was a complex type people could relate to, but it turns out they can relate to it so well that it brings up tragic memories I can only equate to the Post Traumatic Stress Disorder suffered by war veterans or (early or wrong) technology adopters. That was not my intent either. XML was merely an example. I will not say you should use XML in your tables, but I will also not say you should steer clear of it, as many people wanted me to say. I will say its use is rare, but it has its place, just as any other complex type does, and it has its own special needs for navigation, indexing, etc., which many relational databases handle well enough.

Continue reading "The Relational Model is very much alive"

Sunday, December 04. 2011

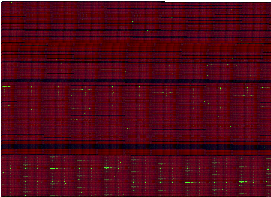

What happens when you take a bunch of auto-generated tests and test results and burn them into rasters with SQL?

You get a code fingerprint of sorts. Well, that was the dream I wanted to explore. Now that PostGIS 2.0 has introduced a new spatial type called raster, along with lots of functionality to go with it, including some very cool Map Algebra functions, this is now possible without too much fuss. My first attempt didn't produce quite what I expected. I have to admit it is absolutely gorgeous, and it will hopefully be useful once I figure out how to read it, tweak the rendering process to achieve more of a breakout of results, or patent it as a fabric style.

Now for the story of how a computer nerd is born and how rasters can be useful to nerds.

Continue reading "Code Profiling with PostGIS raster"

Saturday, December 03. 2011

A lot of redditors took offense at our article XPathing XML data with PostgreSQL, the general consensus being: if you are going to stuff XML into a relational database, where will you stop? That is not what relational databases are designed for.

We had committed a sacrilegious sin and, worse yet, encouraged bad habits by forcing people to think more about the different options they have for storing data in a relational database and, God forbid, demonstrating how to query such columns with XML-specific functions. What were we thinking? How dare we try to query XML data with SQL? Perhaps we were thinking like this guy or this guy, both equally misguided spatial relational database folk.

Of course, we went one step further by actually defining a column as xml and daring to store data in it for later consumption, rather than using it as just an intermediary step.

If I want to store navigable documents, I should be using a document database like MongoDB, CouchDB, etc. designed for that kind of stuff. If I've got graphs, I should be using a graph database. This got me thinking that the "Pure Relational Database" is dead, and I'm surprised most people don't seem to realize it.

So while "Relational databases" have changed over the last 25 years, most people's notions of them have not kept pace with that change.

First let me define what I mean by pure. A pure relational database is one with standard meat-and-potatoes types like text, dates, and numbers, well suited for counting money and computing how close the world is to total bankruptcy. You store these as fields in the rows of a table, on which you then define primary keys, foreign keys, and other constraints to relate them to other tables.

You reconstitute your real-world objects by stitching these tables together with joins, filter sets using WHERE conditions, and summarize using GROUP BY or other mathy constructs. Don't get me wrong; these are very beautiful things because they allow for easy slicing of dimensions without having to think about all the dimensions that make up an object all at once. In fact, it was so beautiful that some people thought, "Wow, that's cool, but it would be even cooler if I could store more complex objects in those columns, with their own specific needs for querying." And so was born the object-relational database, as some people call it: a database that thinks relational but also understands that different types have their own unique needs for querying, storage, indexing, etc.

Nowadays most, if not all, relational like databases have standardized on some variant of SQL.

In essence though, the pure relational database doesn't allow you to define new types or have exotic types such as arrays, xml, graphs, geometries, rasters, sparse matrices etc.

There is much less thinking involved, and you are less likely to shoot yourself in the foot by dumping a bunch of XML in a field and trying to do something with it.

When it is used to store more complex things such as spreadsheets and other user documents, these are stored as blobs and just retrieved. Even such use is frowned upon.

Well, most relational databases I can think of nowadays have richer types: e.g. PostgreSQL, Oracle, and Firebird all support arrays as a column type. Some even allow you to define custom types and functions to support them: PostgreSQL (I could go on forever), Oracle, which has rich user-defined type support too, and SQL Server 2005+, with each version getting better for user-defined types and introducing more exotic types and supporting infrastructure. Even MySQL/Drizzle gets in on it, mostly in the form of different storage engines. Even my favorite lightweight database, SQLite, has some tricks under the hood that aren't what I would call relational. E.g. SpatiaLite/RasterLite has a whole geometry type library built on SQLite with functions you can call from SQL, and I'm sure there are lots of middleware tools you don't know about using the SQLite and Firebird engines for more than relational tasks (HTML5 anyone? CAD anyone?).

Monday, November 21. 2011

In this day and age of clouds and whatnot, it is still important to keep tabs on your database growth to ensure it doesn't outgrow your disks, or so that you can be prepared when it does. A common question is how big your database is, or how big all your databases are combined. Luckily, PostgreSQL has long had functions to help you answer this easily. In this little article, I'll demonstrate how to answer these questions and more with some PostgreSQL built-in functions.
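As a quick preview, here is a minimal sketch using the built-in pg_database_size and pg_size_pretty functions. It is not necessarily the set of queries in the full article, and on some setups pg_database_size needs CONNECT privilege on each database it measures.

```sql
-- size of the database you are connected to, in human-readable units
SELECT pg_size_pretty(pg_database_size(current_database())) AS db_size;

-- every database on the server, largest first
SELECT datname,
       pg_size_pretty(pg_database_size(datname)) AS size
FROM pg_database
ORDER BY pg_database_size(datname) DESC;

-- all databases combined (sum() yields numeric, so cast back to bigint)
SELECT pg_size_pretty(sum(pg_database_size(datname))::bigint) AS total_size
FROM pg_database;
```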

Continue reading "How big is my database and my other stuff"

Friday, November 11. 2011

One of my favorite tools, and I think a favorite of many folks working with GIS and other kinds of multimedia, is the GDAL/OGR suite.

Though I started using it to conquer GIS ETL activities, I found myself using it for problems that are inherently not GIS at all. I talked about the GDAL OGR2OGR component a while ago in GDAL OGR2OGR for Data Loading, and this time I'll talk tangentially about its raster capabilities. It is a fantastic tool for converting between various raster formats and applying various raster operations.

In the PostGIS world, the new 2.0 raster functionality puts an SQL wrapper around much of its power. I'm not going to talk about that though, except as a fleeting comment to explore later (we've got cool two-band Map Algebra in PostGIS 2.0 to flaunt its stuff).

So what does this have to do with XPathing XML data with PostgreSQL? Well, I'm going to talk about what to do with machine-generated data that comes at you in XML format.

A lot of machine-generated data is hitting us in an XML-like form. I talked about GPX data and navigating it in an earlier article; GPX, by the way, is something GDAL/OGR can easily load into and export out of a PostGIS-enabled database.

GDAL exposes another kind of machine-generated data in XML format which turns out to be very useful for all kinds of things: Exchangeable image file format (EXIF) data. All kinds of random text information is embedded in pictures, and it varies depending on the camera taking them. Newer cameras, like the ones built into your iPhone or Android phone, sometimes embed location-based information, such as where you were standing when you took the picture. Most cameras these days embed the time the picture was taken.

This information is important because if you are taking electronic notes while you are snapping your pictures, it provides an easy way to match up your notes with the picture of the object. So what does this EXIF info look like when you point GDAL at it? We'll see.
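To give a flavor of the XPath side before the full article, here is a minimal sketch using PostgreSQL's built-in xpath() function. The XML fragment is a hypothetical stand-in loosely modeled on key/value metadata items; it is not claimed to be GDAL's exact output, and the camera values are made up.

```sql
-- hypothetical EXIF-style metadata document (not GDAL's verbatim format)
WITH doc AS (
  SELECT '<Metadata>
            <MDI key="EXIF_DateTime">2011:11:05 14:32:10</MDI>
            <MDI key="EXIF_Model">Phone Camera</MDI>
          </Metadata>'::xml AS x
)
-- xpath() returns an xml[]; take the first match and cast it to text
SELECT (xpath('/Metadata/MDI[@key="EXIF_DateTime"]/text()', x))[1]::text AS taken_at
FROM doc;
```

The same pattern, with a different key in the predicate, pulls out any other item, which is what makes matching pictures to time-stamped notes a simple join.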

Continue reading "XPathing XML data with PostgreSQL"

Thursday, November 03. 2011

As mentioned in Lessons Learned Packaging PostGIS extensions, I am working on PostGIS 2.0.0 extension packaging. One of the things I wanted to know was what objects (types, functions, etc.) were installed by my extension. The new packaging system allows for cataloging this relatively easily, but I couldn't find a function or view for it and didn't see one mentioned in the manual, so I created this query, which seems to work pretty well as far as I can tell. The basic idea is that any object recorded in pg_depend as depending on the extension with dependency type 'e' is part of the extension package.

-- list the objects that belong to the postgis extension
SELECT c.relname As item_type,
    COALESCE(proc.proname, typ.typname, cd.relname, op.oprname,
        'CAST(' || cs.typname || ' AS ' || ct.typname || ') ', opcname, opfname) As item_name,
    -- proisagg exists in the 9.x catalogs; PostgreSQL 11 replaced it with prokind
    COALESCE(proc.proisagg, false) As is_agg, oidvectortypes(proc.proargtypes) As arg_types
FROM pg_depend As d
    INNER JOIN pg_extension As e ON d.refobjid = e.oid
    -- c.relname names the catalog the member lives in (pg_proc, pg_type, ...)
    INNER JOIN pg_class As c ON c.oid = d.classid
    LEFT JOIN pg_proc AS proc ON proc.oid = d.objid
    LEFT JOIN pg_type AS typ ON typ.oid = d.objid
    LEFT JOIN pg_class As cd ON cd.oid = d.objid
    LEFT JOIN pg_operator As op ON op.oid = d.objid
    LEFT JOIN pg_cast AS ca ON ca.oid = d.objid
    LEFT JOIN pg_type AS cs ON ca.castsource = cs.oid
    LEFT JOIN pg_type AS ct ON ca.casttarget = ct.oid
    LEFT JOIN pg_opclass As oc ON oc.oid = d.objid
    LEFT JOIN pg_opfamily As ofa ON ofa.oid = d.objid
-- deptype 'e' marks an object as a member of the extension
WHERE d.deptype = 'e' and e.extname = 'postgis'
ORDER BY item_type, item_name;

The output looks like:

Continue reading "What objects are in a PostgreSQL installed extension"
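A shorter variant of the same idea is sketched below, assuming PostgreSQL 9.1+, where pg_describe_object() turns each pg_depend entry into a readable description so the per-catalog joins are not needed. It uses 'plpgsql' only because that extension is installed by default; swap in 'postgis' for the case above. In psql, \dx+ postgis gives a similar listing.

```sql
-- list every member object of an extension in human-readable form
SELECT pg_describe_object(d.classid, d.objid, d.objsubid) AS member
FROM pg_depend AS d
INNER JOIN pg_extension AS e
        ON d.refclassid = 'pg_extension'::regclass  -- the referenced object is an extension
       AND d.refobjid = e.oid
WHERE d.deptype = 'e'              -- 'e' = membership in the extension
  AND e.extname = 'plpgsql'
ORDER BY member;
```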

Tuesday, November 01. 2011

Ever need to create a holding table for, say, spreadsheet data with 100 columns? Or perhaps you were feeling in a sadistic mood and wanted to abuse your PostgreSQL database to see how many columns of a specific data type you can create in a table.

Here is a quick script to do it:

-- PostgreSQL 8.4+: array_agg + array_to_string
SELECT 'CREATE TABLE data_import('
    || array_to_string(array_agg('field' || i::text || ' varchar(255)'), ',') || ');'
FROM generate_series(1,10) As i;

-- PostgreSQL 9.0+: string_agg does the same in one step
SELECT 'CREATE TABLE data_import('
    || string_agg('field' || i::text || ' varchar(255)', ',') || ');'
FROM generate_series(1,10) As i;

Both variants will return output that looks like this:

CREATE TABLE data_import(field1 varchar(255),field2 varchar(255),field3 varchar(255),field4 varchar(255),field5 varchar(255),field6 varchar(255),field7 varchar(255),field8 varchar(255),field9 varchar(255),field10 varchar(255));

Now if you also want it to execute, because you are running it as part of an SQL script, you could wrap it in an anonymous DO block (PostgreSQL 9.0+) and run the generated statement with EXECUTE.

DO LANGUAGE plpgsql
$$
DECLARE var_sql text;
BEGIN
    -- build the CREATE TABLE statement from the series, then run it
    SELECT 'CREATE TABLE data_import('
        || string_agg('field' || i::text || ' varchar(255)', ',') || ');'
      INTO var_sql
      FROM generate_series(1,10) As i;
    EXECUTE var_sql;
END;
$$;
